Anthropic: A Technical and Business Model Analysis

By SteveLo, Developer of ccusage_go, March 25, 2026

This analysis examines Anthropic’s technical capabilities, business model, and pricing architecture based on publicly available data, API documentation, academic research, GitHub issues, and first-party token usage analysis using ccusage_go.

All claims are sourced. All numbers are verifiable.

1. Core Technical Capability Assessment

What Anthropic Builds In-House

Anthropic develops a family of large language models (LLMs) called Claude. These models process and generate text. This is Anthropic’s sole proprietary technology.

10 Tips to Stop Claude Code From Burning Your Money and Ignoring Your Instructions

By SteveLo — Developer of ccusage_go

Everyone’s raving about how Claude Code + Codex CLI is the dream combo. “Use Claude to build, Codex to review!” “Two AIs are better than one!”

I hate to break it to you — but the reason this works has nothing to do with Codex being smarter. It’s because Codex gives you a fresh context with zero cache.

That’s right. Your Claude Code isn’t getting dumber because the model is bad. It’s getting dumber because the cache architecture is slowly poisoning it. And Codex “fixes” it simply by not having that problem.

The Cache Trap: How Claude Code's Architecture Costs You 30x More While Making the Model Worse

By SteveLo, Developer of ccusage_go, March 25, 2026

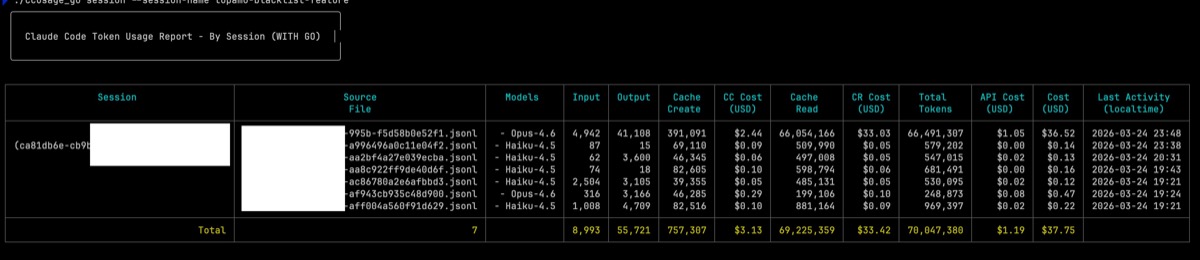

I’ve been a Claude Code Max 20x subscriber ($200/month). I’m also the developer of ccusage_go, an open-source Go tool that parses Claude Code’s local JSONL session logs to calculate real token usage and costs.
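To give a rough idea of what that parsing involves, here is a minimal sketch. It is not ccusage_go's actual code. The token counter names follow the Anthropic API `usage` object; the assumption that each JSONL record nests them under `message.usage` is mine, and `sumUsage` and `logLine` are hypothetical names used only for illustration.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"strings"
)

// usage holds the four token counters reported in an API "usage" object.
type usage struct {
	InputTokens              int64 `json:"input_tokens"`
	OutputTokens             int64 `json:"output_tokens"`
	CacheCreationInputTokens int64 `json:"cache_creation_input_tokens"`
	CacheReadInputTokens     int64 `json:"cache_read_input_tokens"`
}

// logLine is an ASSUMED shape for one JSONL record: usage nested under "message".
type logLine struct {
	Message struct {
		Usage *usage `json:"usage"`
	} `json:"message"`
}

// sumUsage totals the counters over every line of JSONL text.
// Lines that are blank, malformed, or carry no usage object are skipped.
func sumUsage(jsonl string) usage {
	var total usage
	sc := bufio.NewScanner(strings.NewReader(jsonl))
	// Session records can be long, so raise the scanner's line limit to 16 MiB.
	sc.Buffer(make([]byte, 0, 64*1024), 16*1024*1024)
	for sc.Scan() {
		var rec logLine
		if err := json.Unmarshal(sc.Bytes(), &rec); err != nil || rec.Message.Usage == nil {
			continue
		}
		u := rec.Message.Usage
		total.InputTokens += u.InputTokens
		total.OutputTokens += u.OutputTokens
		total.CacheCreationInputTokens += u.CacheCreationInputTokens
		total.CacheReadInputTokens += u.CacheReadInputTokens
	}
	return total
}

func main() {
	// Made-up sample data, not taken from a real session.
	sample := `{"message":{"usage":{"input_tokens":10,"output_tokens":5,"cache_creation_input_tokens":100,"cache_read_input_tokens":1000}}}
{"type":"summary"}
{"message":{"usage":{"input_tokens":2,"output_tokens":3,"cache_creation_input_tokens":0,"cache_read_input_tokens":1100}}}`
	fmt.Printf("%+v\n", sumUsage(sample))
}
```

Turning these totals into dollars additionally needs a per-model price for each counter, which this sketch leaves out.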

What I found made me cancel my subscription.

TL;DR

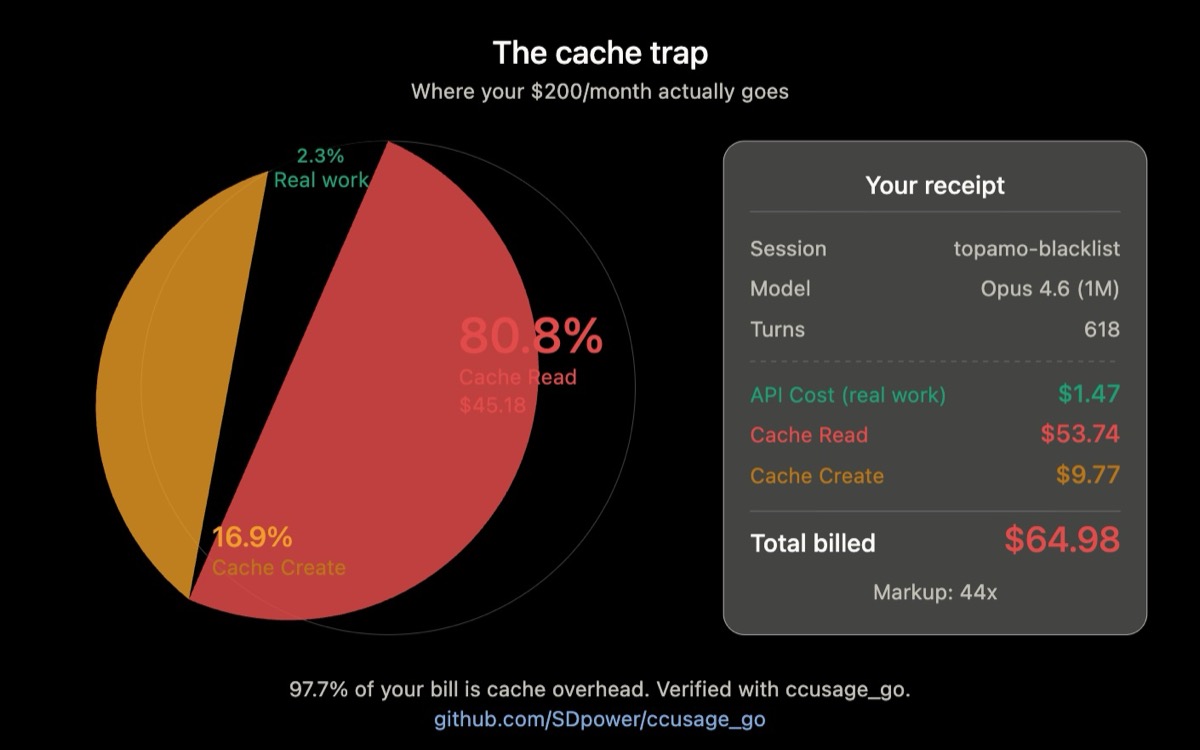

- Claude Code charges you for Cache Read and Cache Create tokens that make up 97.7% of your bill — the actual productive work (API Cost) is only 2.3%.

- One single session cost $55.94. My entire monthly subscription is $200. Three such sessions come to $167.82, and a fourth would put me over.

- The cache architecture doesn’t just cost more — it actively degrades the model’s ability to follow your instructions, creating a vicious cycle where you retry (and pay more) because the model stops listening.

- Boris Cherny (@boris_cherny), Head of Claude Code, promotes workflows on an internal Anthropic account with no quota limits. Paying users who follow his advice get burned.

- Anthropic has never publicly disclosed how cache tokens count against Max subscription quotas.
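The arithmetic behind the first two bullets can be sketched in a few lines of Go. This is illustrative only: it assumes the 97.7% cache share applies to the $55.94 session, which the summary above does not state explicitly, and `splitCost` and `fullSessions` are hypothetical helper names, not part of ccusage_go.

```go
package main

import (
	"fmt"
	"math"
)

// splitCost divides a dollar total into a cache part and an API part,
// given the cache share as a percentage of the total.
func splitCost(total, cachePct float64) (cache, api float64) {
	cache = total * cachePct / 100
	return cache, total - cache
}

// fullSessions returns how many whole sessions of the given cost fit in a budget.
func fullSessions(budget, perSession float64) int {
	return int(math.Floor(budget / perSession))
}

func main() {
	const sessionCost = 55.94 // dollars, from the TL;DR
	const cacheShare = 97.7   // percent, from the TL;DR (ASSUMED to apply to this session)
	const subscription = 200  // dollars per month

	cache, api := splitCost(sessionCost, cacheShare)
	fmt.Printf("cache: $%.2f, API: $%.2f\n", cache, api)
	fmt.Printf("whole sessions within $%d: %d\n", subscription, fullSessions(subscription, sessionCost))
}
```

Note that this compares API-priced cost against the subscription fee directly; how cache tokens actually count against the Max quota is, as the last bullet says, not publicly documented.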

Part 1: The Numbers Don’t Lie

One Session, Exposed

Using ccusage_go’s new CC Cost / CR Cost / API Cost breakdown on a single session (topamo-blacklist-feature):

Anthropic’s ‘Department of War’ Statement: Moral Branding vs. Security Reality

Primary source: Anthropic News: Statement on Department of War

Anthropic’s statement positions the company as a partner to democratic governments while drawing hard lines on specific uses (domestic mass surveillance and fully autonomous weapons). As policy messaging, it is highly polished: it presents national-security cooperation, ethical constraints, and institutional responsibility in one coherent narrative.

The tension appears when that narrative is compared with events from the past year. Claude has repeatedly been framed as a safety-focused model, yet multiple public incidents suggest recurring misuse and extraction pressure. In that context, the statement can be read not only as an ethical position, but also as narrative repositioning: shifting attention from “our model has been abused” to “we are principled gatekeepers.”

Claude Code Agent Teams: The New Frontier of Multi-Agent Collaboration

Recommended Prerequisites

This article covers Claude Code’s experimental Agent Teams feature. If you’re not yet familiar with the basics of Subagents, consider reading these articles first:

Have you ever faced this scenario? A large refactoring task where you need to simultaneously analyze security implications, performance impact, and test coverage — but a single Agent can only focus on one thing at a time. While Subagents can divide work, they operate like a “relay race”: completing tasks and throwing results back, unable to communicate directly with each other.

Claude Code 2.0.41-2.0.47 Release Notes

📋 Version Overview

This article summarizes the important updates in Claude Code versions 2.0.41 through 2.0.47, covering new features, improvements, and bug fixes across six releases.

🎯 Highlights

- ✨ PermissionRequest Hook: Automated permission approval mechanism

- ☁️ Azure AI Foundry Support: New cloud platform integration

- 🔧 Subagent Hooks Enhancement: More complete lifecycle control

- 🚀 Background Task Sending: Send tasks to run in the background on the web

- 🐛 Extensive Bug Fixes: Improved stability and user experience

✨ New Features

1. PermissionRequest Hook (v2.0.45)

The new PermissionRequest Hook allows you to automatically approve or deny tool permission requests using custom logic, significantly improving automation workflow efficiency.
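As a hedged illustration of what such custom logic might look like, the sketch below models only the decision step. The `request` type, the `tool_name` and `tool_input.command` field names, and the "allow" / "deny" / "ask" return values are assumptions made for this example; the exact input and output format the hook expects is not shown here and should be taken from the official hooks documentation.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// request is a HYPOTHETICAL, simplified view of a permission request.
type request struct {
	ToolName  string `json:"tool_name"`
	ToolInput struct {
		Command string `json:"command"`
	} `json:"tool_input"`
}

// decide applies a small example policy:
//   - read-only file access is approved,
//   - shell commands containing "rm -rf" are rejected,
//   - everything else is left for the user to confirm.
func decide(r request) string {
	switch {
	case r.ToolName == "Read":
		return "allow"
	case r.ToolName == "Bash" && strings.Contains(r.ToolInput.Command, "rm -rf"):
		return "deny"
	default:
		return "ask"
	}
}

func main() {
	// Made-up request payload for demonstration.
	raw := `{"tool_name":"Bash","tool_input":{"command":"rm -rf build"}}`
	var r request
	if err := json.Unmarshal([]byte(raw), &r); err != nil {
		// If the payload cannot be parsed, fall back to asking the user.
		fmt.Println("ask")
		return
	}
	fmt.Println(decide(r))
}
```

Defaulting to "ask" keeps the policy conservative: anything the rules do not recognize still goes through manual confirmation.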

Claude Code Hooks System and Migration Guide: Workflow Automation and Smooth Upgrades

Claude Code 2.0 Core Features Deep Dive Series

This is Part 3 (Final) of the series. The complete series includes:

- Skills and Sandbox - Enhancing Extensibility and Security

- Subagents and Plan Mode - The New Era of Intelligent Collaboration

- Hooks System and Migration Guide - Workflow Automation and Smooth Upgrades (This Article)

Claude Code 2.0.30 introduces the powerful Prompt-based Stop Hooks feature, along with two important migration requirements: the deprecation of Output Styles and SDK updates. This article explores how the Hooks system can be applied in practice and provides a detailed migration guide to ensure a smooth upgrade to the latest version.

Claude Code Subagents and Plan Mode: The New Era of Intelligent Collaboration

Claude Code 2.0 Core Features Deep Dive Series

This is Part 2 of the series. The complete series includes:

- Skills and Sandbox - Enhancing Extensibility and Security

- Subagents and Plan Mode - The New Era of Intelligent Collaboration (This Article)

- Hooks System and Migration Guide - Workflow Automation and Smooth Upgrades

Claude Code 2.0.28 introduces the revolutionary Plan Subagent and enhanced Subagent system, combined with v2.0.21’s Interactive Questions feature, pioneering a new collaborative model for AI-assisted development. This article provides an in-depth exploration of Subagent enhancements, Plan Mode mechanisms, and how to build smarter development workflows through interactive Q&A.

Claude Code Skills and Sandbox: Enhancing Extensibility and Security

Claude Code 2.0 Core Features Deep Dive Series

This is Part 1 of the series. The complete series includes:

- Skills and Sandbox - Enhancing Extensibility and Security (This Article)

- Subagents and Plan Mode - The New Era of Intelligent Collaboration

- Hooks System and Migration Guide - Workflow Automation and Smooth Upgrades

Claude Code 2.0.20 introduces two revolutionary features: Skills and Sandbox Mode. Skills enable Claude to automatically activate specialized functional modules, greatly enhancing extensibility; Sandbox Mode elevates security to a new level through OS-level isolation. This article provides an in-depth exploration of the principles, configuration, and practical applications of these two features.

Claude Code Output Styles: A Comprehensive Guide to Unlocking AI Assistant's Full Potential

Claude Code’s newly introduced Output Styles feature fundamentally changes how we interact with AI programming assistants. This powerful capability not only breaks Claude Code free from traditional software engineering boundaries but also enables every user to craft personalized AI assistant behaviors tailored to their specific needs.

What are Output Styles?

Output Styles is a revolutionary feature in Claude Code that allows users to directly modify the system prompt, thereby changing Claude’s behavior patterns and response styles. This means you can transform Claude Code from a pure programming assistant into a code review expert, educational tutor, technical support consultant, or even a creative ideation partner.